Section 2

Chapter 3

Splinternet as a “lived experience”: a user’s sovereignty inside authoritarian networks

Researcher, eQualitie

By Ksenia Ermoshina, a senior researcher at the Center for Internet and Society of the CNRS, eQualitie and The Citizen Lab of the University of Toronto. She also conducts user research, community work, and field testing for decentralized free software projects such as Delta Chat, Ceno browser, and Ouisync. Ksenia holds a PhD in socio-economy of innovation from Mines Paris Tech. Her research interests lie at the intersection of STS and network measurements, with a focus on studying information control and circumvention technologies in war and conflict zones.

We often speak of splinternet from a geopolitical or internet governance point of view. But here is the thing: people already live and work inside splinternets for many hours, days or months. We do not live on the same Internet even if we live in the same country. In a splintered network, online content appears different to different groups and can depend on class, gender or ethnicity. Approaching the concept of splinternet from the angle of lived experience offers a different lens for measuring the impact of digital fragmentation. Censoring marginal groups builds isolated informational bubbles and introduces dramatic inequalities in the experience of Internet usage. This fragmentation of online experiences is further exacerbated by the introduction of a tiered Internet, as happened in Iran in January 2026, where elites could access the global web while the majority of the population was locked inside the National Information Network (NIN).

Shutdowns and splinternet are real-world experiences that affect how we feel, physically and mentally, how we talk to our family and friends, how we do our jobs and perceive events around us. Some of the unexpected consequences of splinternet include direct harm to health. For instance, in Russia an app used to track insulin levels in schoolchildren stopped working when the government introduced mobile shutdowns and whitelists. Parents lost track of their children’s health data, which led to emergencies that endangered the children’s lives. Mobile shutdowns have also led to people being killed by drones because drone alerts were not delivered. It therefore becomes urgent to understand splinternet and individual digital sovereignty at this material, personal and bodily level.

At SplinterCon Paris we heard several first-hand accounts of living under tiered censorship and digital fragmentation: from the Soviet censorship of journals and books to the contemporary cases of Ukraine and Venezuela. In addition, a dedicated panel on gender and sexuality as factors of informational isolation covered the experiences of women and LGBTQI people, including what Hannes-Jeremia Jaacks described in his presentation as “algorithmic invisibility”. These accounts demonstrate that marginalised users appear to exist inside even more isolated online environments, where information is filtered more intensely than for the majority of the population inhabiting the same territory. Our extended study of the effects of the cyber occupation of Crimea, which includes data from OONI Explorer about the blocking of websites in Crimea, shows how Crimean Tatars (the indigenous people of Crimea) were exposed to much stricter censorship than other Crimeans. LGBTQI groups living in countries with homophobic laws are also subject to double censorship, and many of them opt for digital migration to more secure alternatives. The testimony from Delo LGBT presented at SplinterCon demonstrates the need for Russian queer groups to migrate to federated social media platforms and decentralized messaging solutions in order to continue publishing information about their activities and other LGBT-related news. In sum, the authoritarian shift toward centralized control of the Internet is experienced differently by those who have less privilege.

Venezuela: A Laboratory of Repression and Resistance

The presentation “Venezuela: A Laboratory of Repression and Resistance” by Laura Vidal provided a detailed exploration of how digital authoritarianism is experienced in Venezuela. In Venezuela, information control is not confined to a single tool but emerges from a complex web of interconnected systems. This includes both visible and invisible controls, such as infrastructure disruption, bureaucratic barriers, and state propaganda, which collectively shape the digital and physical reality of Venezuelans. Political actors, journalists, and activists face an intense version of surveillance and harassment, which has become more pervasive over time.

The government has also used legal and administrative tools to undermine the public sphere long before digital repression took hold. Over 400 media outlets have been closed since 2009, creating information deserts across the country by 2024. Laws such as the “Anti-Hate” Law (2017) and the “Anti-NGO” Law (2024) have been selectively used to criminalize opposition and further suppress free expression. These legal frameworks laid the groundwork for the rise of digital repression, as they provided the legal cover for online censorship and surveillance.

Venezuela’s fragile infrastructure is paired with a massive surveillance regime, highlighted by the 2021 Telefónica/Movistar transparency report. This report revealed that 20% of phone lines were monitored, with over 860,000 interception requests and nearly 1 million metadata requests—all done with no judicial oversight. This massive interception regime continues to shape the state’s ability to maintain control over its citizens.

The Venezuelan government has also tied identity to political loyalty through the Carnet de la Patria, a national identity card that links data collection to access to essential services. This system, which encourages citizens to demonstrate political loyalty in exchange for services, makes it easier for the state to control behavior and punish political dissent. It represents a powerful tool of social control, where citizens are made dependent on the state for basic welfare.

The 2024 election crisis in Venezuela led to both physical and digital repression. The aftermath of contested election results saw 915 protests, 21 deaths, and over 2,400 detentions. To suppress transparency, the government blocked key websites, including tally-sheet websites, which would have helped track the election results. The use of new technologies such as Autel drones for facial recognition, VenApp for denunciations, and the circulation of forced confessions via TikTok and YouTube added a new layer of intimidation, reinforcing traditional tactics of public shaming and coercion.

Women, in particular, have faced intensified online and offline harassment. Female activists and candidates, especially those from marginalized communities, were disproportionately targeted, facing gendered attacks, including threats of sexual violence. Research found that women in Venezuela were subjected to 60% more gendered insults than their male counterparts, and community-level denunciations often affected women in poverty and Indigenous women leaders. These gendered forms of repression exacerbated the already harsh conditions under which Venezuelans live.

Despite the repression, civil society in Venezuela has found ways to resist. Organizations such as Espacio Público, Provea, and Conexión Segura y Libre have worked to document and expose the government’s actions. They focus on censorship mapping, disruption tracking, and propaganda analysis to produce public evidence of the state’s abuses, despite the constant risks of harassment, surveillance, imprisonment, and exile. Resistance also takes offline and hybrid forms, with initiatives like El Bus TV, which provides news to communities via public transportation, bypassing digital filters. Similarly, ARI Móvil brings reporting to communities that lack independent media. The app Noticias Sin Filtro has been developed by Conexión Segura y Libre to offer Venezuelans uncensored access to major news outlets.

In the face of digital repression, informal information networks have also emerged. Venezuelans curate and share information through private messaging apps where they engage in fact-checking, audio summaries, and social filtering. These networks play a crucial role in bypassing censorship and facilitating the free flow of information, despite the state’s best efforts to control it.

Venezuela’s experience is part of a broader pattern of cross-regional alliances where authoritarian tactics are shared across regions, and so are the strategies of resistance. Parallels can be drawn between Venezuela and Colombia, Palestine, and Senegal in terms of their experiences with shutdowns and digital repression. These alliances help share knowledge and bypass the traditional North-South hierarchies that often divide global resistance efforts.

Finally, digital third spaces have emerged as places of resistance that are neither strictly national nor global. These spaces are transnational, fostering safety, shared understanding, and learning. They offer community-driven support, allowing for the exchange of knowledge and ideas, and represent a critical space for strengthening resistance against digital authoritarianism globally.

The lived experience of digital authoritarianism in Venezuela shows how deeply the state’s power permeates everyday life, with technology and legal frameworks working hand-in-hand to enforce control. Yet, despite the state’s digital tools of repression, Venezuelans continue to resist, often with innovative and community-driven strategies, providing important lessons for others facing similar digital authoritarian threats.

Living Under Cyberoccupation: Ukrainian Experience from the Frontline

In her presentation “Living Under Cyberoccupation: Ukrainian Experience from the Frontline”, the award-winning Ukrainian journalist Anna Romandash explores the experience of Ukrainians under cyberoccupation, highlighting the impact of voluntary shutdowns, surveillance, misinformation attacks and digital censorship throughout the ongoing conflict with Russia. The presentation examines the interplay of infrastructural disruption and physical warfare, showing how Russian forces use information control to enforce their authority, monitor citizens, and disrupt communication in the occupied territories of Ukraine.

Following the annexation of Crimea and the start of armed conflict in eastern Ukraine in 2014, the Russian state has been occupying physical connectivity infrastructures, such as cell towers or Internet service providers’ offices, in order to control and manipulate online spaces, disrupt communications, and suppress independent journalism. These efforts have included targeting telecommunications networks, spreading disinformation, and employing sophisticated censorship technologies that have evolved over time. In Section 2 of this report we have seen how Russia hijacked Ukrainian traffic, built its own backbone cables and progressively replaced Ukrainian traffic with Russian traffic (see Ermoshina, 2022). Anna Romandash further examines this tactic of cyberoccupation as it has unfolded since the beginning of the full-scale invasion of Ukraine.

A particularly striking cyberattack occurred on December 12, 2023, when Russian hackers attacked the core infrastructure of Kyivstar, a leading Ukrainian telecom provider. This attack resulted in the destruction of 40% of the company’s infrastructure, affecting millions of mobile subscribers and home internet users. However, critical communications for military operations were largely unaffected, highlighting the resilience of military networks. Despite this resilience, the financial losses were significant, amounting to $95 million for the company.

In addition to cyberattacks, the Russian government has extended its technologies of censorship and surveillance to the occupied territories. Crimea and the newly occupied territories of Eastern Ukraine experience more extensive filtering than the Russian territories. The Russian authorities also impose the use of MAX in the occupied territories of Ukraine. MAX is a Russian government-created super app which does not support end-to-end encryption.

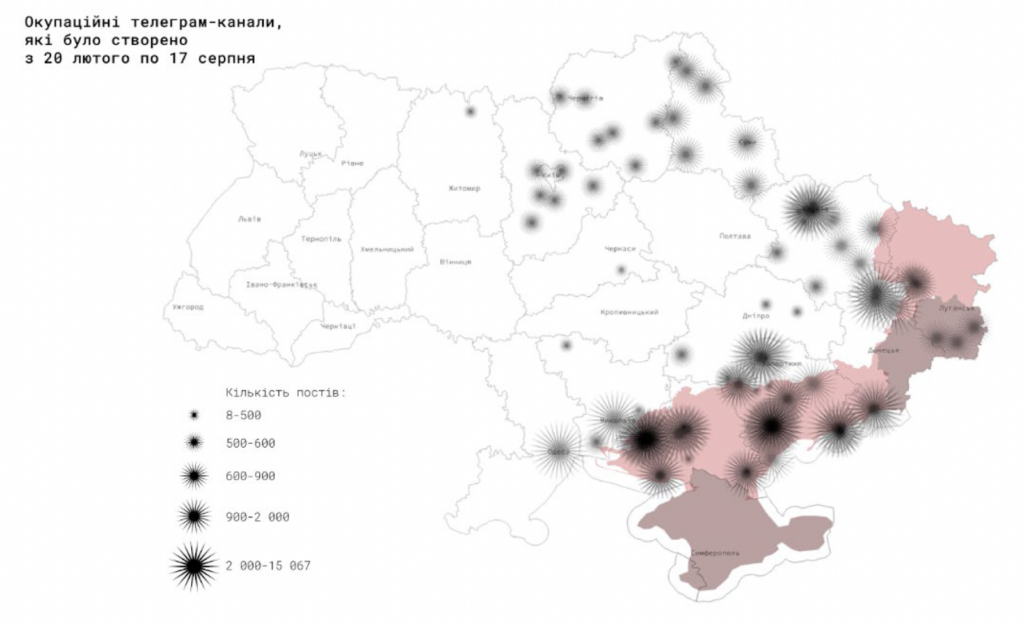

In addition, Anna Romandash describes a massive disinformation campaign which employed dozens of Telegram channels created to impersonate authentic Ukrainian resistance groups or news channels. These channels were used to spread a pro-Russian agenda and disinformation, manipulate the public, and disrupt the flow of accurate information. Russian-backed forces deployed these channels in the cities close to the frontline to undermine Ukrainian efforts and further confuse the narrative by pretending to represent resistance movements. The fake Telegram channels are a clear example of how disinformation and digital manipulation are used in hybrid warfare to control public discourse.

The experience of occupied Ukrainian cyberspace is the experience of an informational bubble that is subject to disinformation, bot-produced content, high levels of phishing and other cyberattacks, as well as physical risks of control, device seizure and searches. There is no peace in this cyberspace. It is dangerous to navigate, and Ukrainians from the occupied territories have to deal with permanent risks and informational isolation.

In his presentation at SplinterCon, Hannes-Jeremia Jaacks, researcher at Women on Web (WoW), which has provided online abortion support for over two decades in more than 180 countries, explained how access to abortion care has increasingly migrated into digital space—and how that shift has exposed women to a new form of structural control: algorithmic invisibility.

Search engines become the new driver of fragmentation and a building block of online walled gardens. Indeed, as Hannes-Jeremia puts it, “an uncensored website is useless if no one can find it”. As clinic closures and abortion bans proliferate internationally, especially in highly restrictive contexts, the internet has become a primary channel through which women seek information about medical abortion using mifepristone and misoprostol. Yet the very infrastructures that mediate access to this information—search engines, advertising systems, and social media platforms—have emerged as powerful gatekeepers.

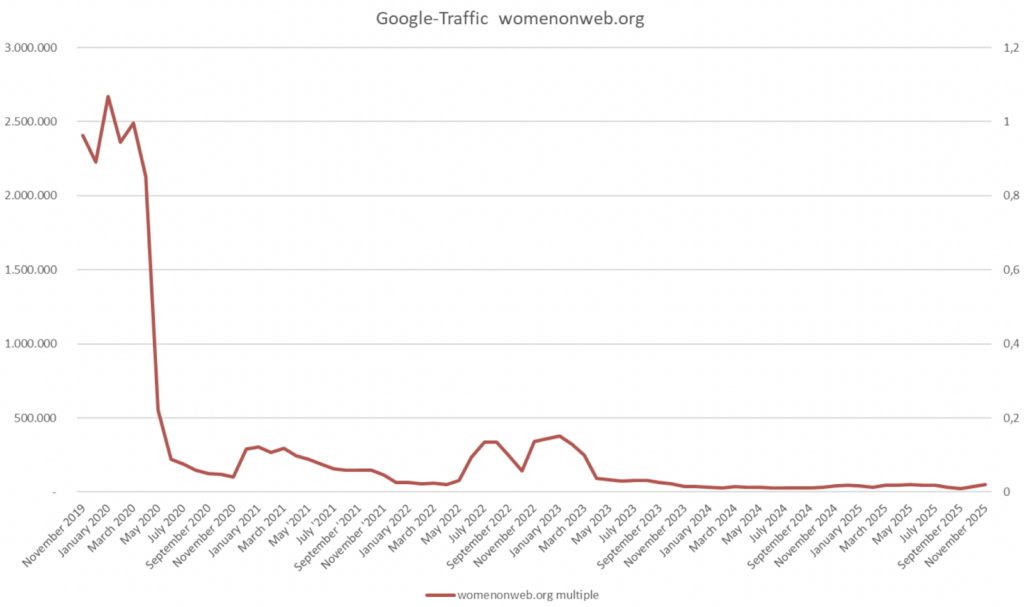

The presentation documents a layered experience of suppression. In some countries, abortion information is directly blocked at the ISP level (the WoW website and its mirrors are blocked in countries including China, Iran, the Philippines, South Korea, Spain, and Turkey). In others, filtering is subtler but equally consequential: shadowbanning on social media, advertising prohibitions tied to prescription services, and sudden traffic collapses following search engine algorithm updates. WoW reports being filtered out by Bing and losing visibility after Google core updates, despite continuing demand for its services. The effect is rarely outright deletion. Instead, it is demotion, disappearance from top results, or exclusion from specific keyword searches—forms of suppression that are difficult to detect but devastating in practice.

This invisibility is particularly acute in search environments. Hannes-Jeremia highlights cases where correctly spelled queries containing the word “abortion” produced filtered or incomplete results on Bing, while misspelled versions of the same query returned more comprehensive listings. Such behavior suggests keyword-based filtering rather than neutral ranking logic. In urgent situations—when someone types “I need an abortion” into a search bar—these ranking differences are not abstract technical quirks. They shape whether medically accurate, safe information appears at all.
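
The comparison described above (a correctly spelled query versus a misspelled one) can be illustrated with a minimal sketch. It assumes that the two lists of result URLs have already been collected by hand or by some other means; the function names, the example URLs and the choice of Jaccard overlap as a similarity measure are illustrative assumptions, not the method used by WoW.

```python
from urllib.parse import urlparse


def domains(urls):
    """Return the set of hostnames found in a list of result URLs."""
    return {urlparse(u).netloc.lower() for u in urls}


def compare_result_sets(correct_urls, misspelled_urls):
    """Compare the domains returned for two spellings of the same query.

    Returns the Jaccard overlap of the two domain sets and the domains
    that appear for only one of the two spellings.
    """
    a, b = domains(correct_urls), domains(misspelled_urls)
    union = a | b
    jaccard = len(a & b) / len(union) if union else 1.0
    return {
        "jaccard": jaccard,
        "only_in_correct": sorted(a - b),
        "only_in_misspelled": sorted(b - a),
    }


# Hypothetical, hand-written result lists (not real search data).
correct = [
    "https://example.org/a",
    "https://news.example.com/x",
]
misspelled = [
    "https://example.org/a",
    "https://news.example.com/y",
    "https://provider.example.net/help",
]

report = compare_result_sets(correct, misspelled)
print(report)
```

A domain that shows up only for the misspelled query is a hint, not proof, of keyword-based filtering: result lists also vary with location, time and personalization, so such a comparison would need to be repeated across many queries and sessions.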

Search engines justify heightened scrutiny of medical content through frameworks such as “Your Money, Your Life” classifications and E-A-T principles (expertise, authoritativeness, trustworthiness). Abortion information is treated as highly sensitive content. Yet Hannes-Jeremia argues that these governance mechanisms function as de facto moral filters, redistributing visibility according to opaque criteria. Traffic graphs show sharp drops following algorithmic updates, not because user demand declined, but because ranking systems changed. In this environment, legitimacy is algorithmically conferred—and algorithmically revoked.

The gendered dimension of this invisibility is central. Women seeking abortion information often do so under conditions of fear, urgency, and secrecy. Their search behavior may be cautious, brief, and emotionally charged. If reliable providers are algorithmically buried, users are more likely to encounter misinformation, anti-abortion crisis centers, or unsafe alternatives. The harm is therefore indirect but material: it lies in the friction inserted between the search query and medically accurate information.

WoW’s response is technical, adaptive, and infrastructural. It has relied on tools provided by eQualitie, such as eQpress for hosting and Deflect for DDoS protection. It has developed anti-censorship blogs optimized for search engines, embedded contact details directly into search snippets, replicated content through Wikipedia entries and mirror sites, and monitored performance through search analytics tools. The strategy reflects a clear understanding that visibility must be engineered within algorithmic systems that privilege link structures, user behavior metrics, and perceived authority signals. The goal is not merely to publish information, but to survive ranking systems.

At a broader level, Hannes-Jeremia reframes censorship as multi-layered: even when content is technically accessible, it may be algorithmically marginalized. The battleground has shifted from state bans to platform governance and search engine logic. Women’s reproductive autonomy and digital self-determination now intersect with ranking factors, moderation policies, advertising restrictions, and opaque filtering mechanisms. The conclusion is not only about abortion rights but about information power. As targeted attacks on online abortion resources increase, ensuring discoverability becomes as critical as ensuring legality. The experience described is not one of dramatic takedowns, but of gradual erasure—of reliable medical knowledge slipping just out of reach at the very moment it is needed most.

The Age of Cognitive Sovereignty

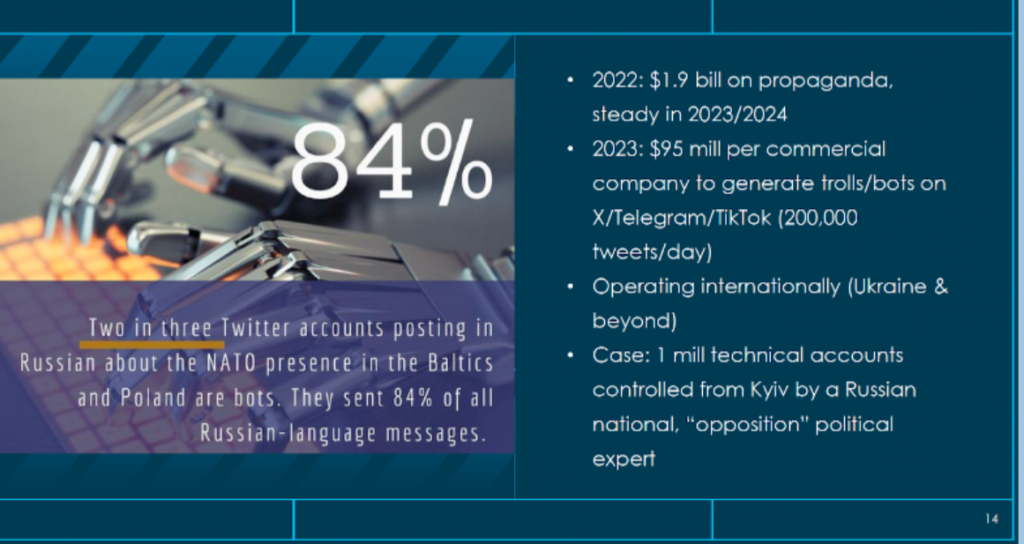

In her presentation, Magdalene Karallis (DISARM Foundation) reframes internet fragmentation not primarily as an infrastructural or regulatory problem, but as a cognitive one. In the “Splinternet era,” fragmentation increasingly manifests at the level of meaning, perception, and interpretation, which the presentation calls the semantic or psycho-cognitive layer of cyberspace.

The Splinternet fractures not only technical networks, but shared realities. Conflict-driven influence operations, AI-enabled manipulation (including deepfakes and leader impersonations), cross-platform disinformation ecosystems, and opaque recommender systems have produced parallel interpretive worlds. Fragmentation therefore operates not only through routing paths or market segmentation, but through narrative divergence: different publics inhabiting incompatible semantic environments.

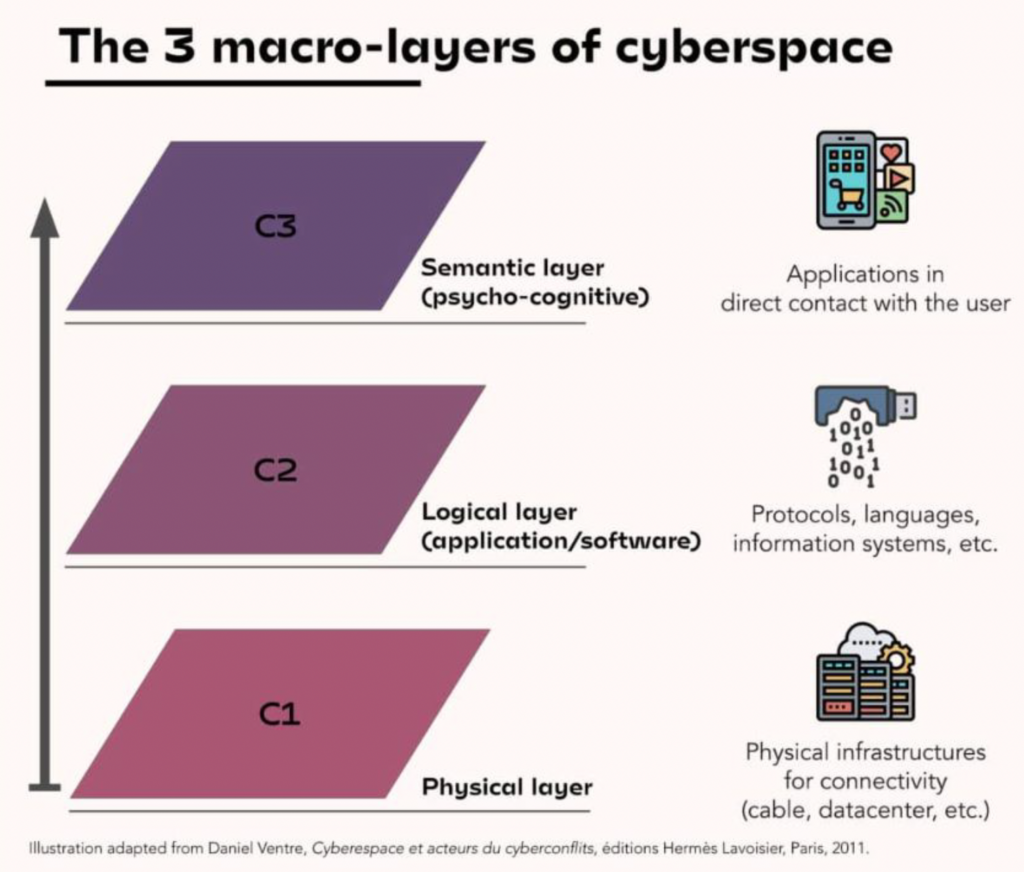

To conceptualize this shift, Magdalene draws on a three-layer model of cyberspace: the physical layer (infrastructure), the logical layer (protocols and software), and the semantic layer (direct user interaction and psycho-cognitive effects). While traditional analyses of internet fragmentation focus on the first two—data localization laws, DNS sovereignty, protocol bifurcation, platform monopolies—Karallis argues that the decisive battleground now lies in the third. The semantic layer shapes how individuals interpret events, evaluate threats, and construct political meaning.

This is where cognitive sovereignty becomes central. It is defined as the ability to interpret threats independently—to resist mass misinformation mobilization, platform-driven visibility biases, echo chambers, and socially engineered divides created by domestic or foreign influence networks. In this formulation, sovereignty is no longer confined to territorial control over infrastructure; it becomes a capacity at the level of the individual mind.

This reconceptualization changes how fragmentation should be measured. If the analytical focus remains solely on cables, routing tables, regulatory regimes, or economic blocs, one may overlook the fragmentation of interpretive capacity. Cognitive fragmentation manifests when individuals’ threat perceptions, political judgments, and emotional responses are systematically shaped by coordinated influence operations. The body and personality—attention, affect, trust, fear—become the sites where fragmentation materializes.

The presentation identifies a coordination problem across governments, platforms, researchers, and civil society: similar behaviors are labeled differently; investigations are conducted in incompatible formats; influence techniques are observed repeatedly without shared mapping. This semantic disunity weakens resilience precisely at the cognitive layer. Fragmentation is thus reproduced analytically: even responders lack a shared vocabulary.

DISARM offers a response to this problem. Inspired by the MITRE ATT&CK framework but adapted for influence operations, DISARM provides a structured taxonomy of tactics, techniques, and procedures applicable across regions and conflicts. It offers consistent tagging, machine readability (STIX/OpenCTI compatibility), and interoperability with EU and NATO threat-intelligence formats. The goal is not merely classification, but alignment—creating a shared semantic infrastructure for identifying and comparing influence operations globally.
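
To make “machine readability” more concrete, here is a minimal sketch of what a technique record can look like as a STIX 2.1 `attack-pattern` object, built with the Python standard library only. The technique name, the `example-framework` source name and the `T-EXAMPLE-1` identifier are placeholders invented for illustration; they are not real DISARM entries, and this is not DISARM’s own export format.

```python
import json
import uuid
from datetime import datetime, timezone


def make_attack_pattern(name, framework, technique_id):
    """Build a minimal STIX 2.1 attack-pattern object as a plain dict.

    `framework` and `technique_id` are stored as an external reference so
    that different tools can agree on which technique is meant.
    """
    now = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S.000Z")
    return {
        "type": "attack-pattern",
        "spec_version": "2.1",
        "id": f"attack-pattern--{uuid.uuid4()}",
        "created": now,
        "modified": now,
        "name": name,
        "external_references": [
            {"source_name": framework, "external_id": technique_id}
        ],
    }


# Placeholder values, assumed for illustration only.
record = make_attack_pattern(
    "Impersonate a local news channel", "example-framework", "T-EXAMPLE-1"
)
print(json.dumps(record, indent=2))
```

Because the record is plain JSON with a stable external identifier, two organizations that have never coordinated can still recognize that they are describing the same technique.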

Indeed, cognitive sovereignty depends on shared analytical standards. When responders across sectors use incompatible vocabularies, they inadvertently reinforce fragmentation. A standardized mapping system enables rapid aggregation of activity across platforms and conflicts, pattern recognition (“same tactic, new theater”), and interoperable workflows that do not rely on shared physical infrastructure. In this way, standardization becomes a defensive mechanism at the semantic layer.
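
The “same tactic, new theater” pattern can be sketched in a few lines once observations carry a shared technique identifier. The example below is an assumption-laden illustration: the record fields (`technique_id`, `theater`, `source`), the identifiers and the theater names are all hypothetical and do not come from the presentation.

```python
from collections import defaultdict


def techniques_across_theaters(observations):
    """Group observations by technique ID and keep the techniques
    that were reported in more than one theater."""
    seen = defaultdict(set)
    for obs in observations:
        seen[obs["technique_id"]].add(obs["theater"])
    return {tid: sorted(theaters) for tid, theaters in seen.items() if len(theaters) > 1}


# Hypothetical observations from different responders (not real data).
observations = [
    {"technique_id": "T-EXAMPLE-1", "theater": "theater-a", "source": "ngo"},
    {"technique_id": "T-EXAMPLE-1", "theater": "theater-b", "source": "platform"},
    {"technique_id": "T-EXAMPLE-2", "theater": "theater-a", "source": "researcher"},
]

recurring = techniques_across_theaters(observations)
print(recurring)
```

The grouping works only because every responder tagged the behavior with the same identifier; with free-text labels, the two `T-EXAMPLE-1` reports would likely never be matched.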

The case studies (election interference in the Sahel and fact-checking organizations confronting Russian disinformation campaigns) demonstrate how shared terminology and structured mapping enable coordinated response and resilience building. Through training models, study guides, and cross-sector collaboration, DISARM aims to build what the presentation calls a “Europe-driven analytical standard for the semantic layer”.

The deeper analytical shift lies in recognizing that the Splinternet is not only a geopolitical or infrastructural phenomenon. It is a psychological one. Influence operations target perception, memory, identity, and emotional triggers. AI-enabled manipulation intensifies this by creating synthetic media that directly engages sensory perception. Platform recommender systems shape attention patterns and emotional reinforcement loops. Fragmentation thus penetrates the body: stress responses, polarization dynamics, trust erosion, and identity hardening.

By foregrounding cognitive sovereignty, the presentation suggests that measuring fragmentation requires examining how individuals experience reality—whether they can independently interpret information without being captured by engineered narratives. The unit of analysis shifts from networks and markets to interpretive autonomy. The “response layer” must therefore be standardized before fragmentation hardens irreversibly.

In this framework, cognitive sovereignty is both a diagnostic and a normative goal. It reorients internet governance debates from infrastructure control toward the preservation of interpretive agency. Fragmentation becomes measurable not only in routing paths or regulatory regimes, but in the degree to which populations retain the psychological capacity to evaluate information critically, resist manipulation, and maintain coherent shared realities.